Tactile Feedback in Virtual Reality

Improving Spatial Interaction · Bachelor Thesis @ Airbus Defence and Space

When Touch Meets Virtual Space

Virtual environments are visually immersive, yet often physically detached.

My bachelor thesis explored a central question: Can tactile feedback improve how we perceive, understand, and interact with space in virtual reality (VR)?

A user study was conducted to investigate whether integrating haptic stimuli into spatial interaction tasks could enhance precision, spatial awareness, and user confidence in virtual environments.

Hypotheses (shortened)

The thesis investigated whether adding feedforward vibrotactile directional guidance to spatial interaction tasks:

- H1 Relieves the visual channel by reducing cognitive load during interaction.

- H2 Improves interaction performance in terms of task accuracy and execution time.

- H3 Improves spatial multitasking through better attention distribution.

- H4 Ensures usability by providing intuitive, supportive and distraction-free interactions.

Feedforward Vibrotactile Directional Guidance? What is that?

In short, it is a tactile guidance approach that conveys directional information before a user performs a movement, using vibration patterns to indicate where to move next. Instead of reacting to movements (feedback), it proactively supports spatial orientation by providing intuitive haptic cues that guide the user toward a target location.

In my study, I implemented this guidance approach in a wearable haptic prototype to investigate whether anticipatory directional vibration improves spatial interaction in virtual environments. The system was integrated into a VR task in which participants had to locate and align virtual targets, allowing me to compare performance and subjective experience with and without vibrotactile feedforward cues.

Curious how the prototype looked? Or how it worked?

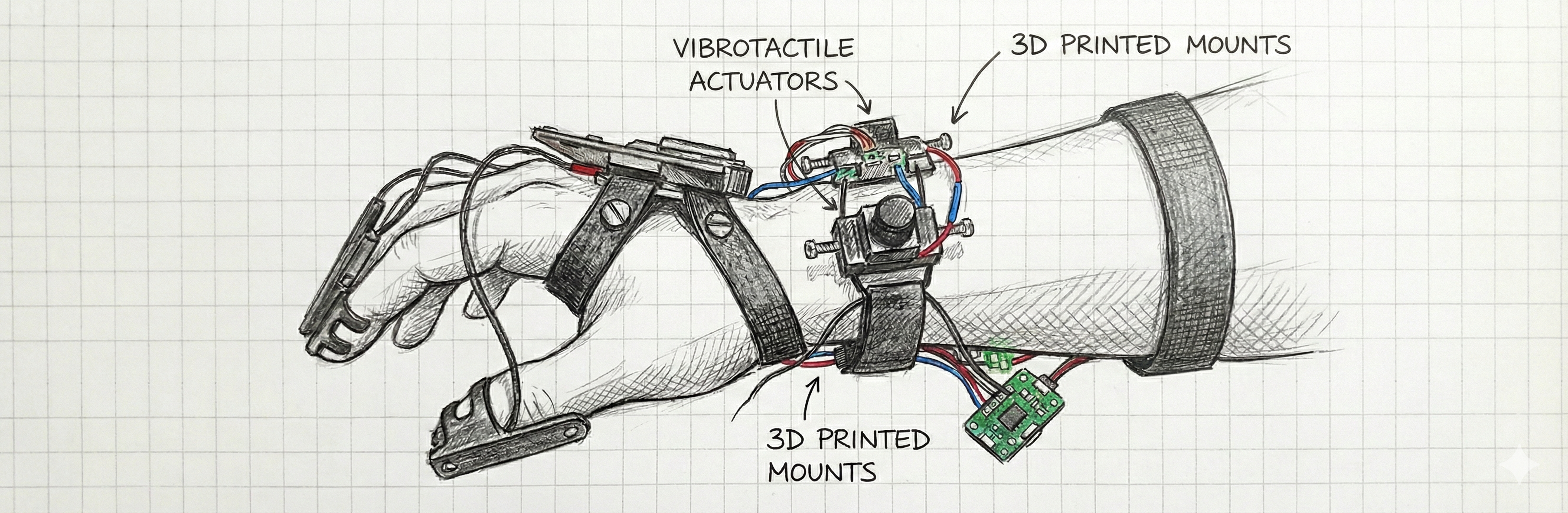

The prototype consisted of a wearable hand-mounted and wrist-mounted system combining vibration motors, a microcontroller, and custom 3D-printed mounts. Several small vibrotactile actuators were distributed around the hand and wrist so that each motor corresponded to a specific spatial direction relative to the user’s hand. When a virtual target appeared, the system calculated the directional vector between the current hand position and the target position, and activated the motor located on the side of the hand that matched this direction. The 3D-printed mounts ensured stable positioning of the motors against the skin, enabling clear and localized vibration cues while allowing enough freedom of movement for natural interaction within the VR task.
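The direction-to-motor mapping described above can be sketched roughly as follows. This is a minimal illustration, not the thesis implementation: the four-motor layout, the 2D hand-local coordinate frame, the `dead_zone` threshold, and all names are assumptions made for the example.

```python
import math

# Hypothetical actuator layout: unit vectors in the hand's local frame
# (x = right, y = up). The real prototype's motor count and placement may differ.
MOTOR_DIRECTIONS = {
    "left": (-1.0, 0.0),
    "right": (1.0, 0.0),
    "up": (0.0, 1.0),
    "down": (0.0, -1.0),
}


def select_motor(hand_pos, target_pos, dead_zone=0.02):
    """Return the name of the motor best aligned with the hand-to-target direction.

    Positions are (x, y) tuples in the hand's local frame (assumed metres).
    Returns None when the hand is closer to the target than `dead_zone`.
    """
    dx = target_pos[0] - hand_pos[0]
    dy = target_pos[1] - hand_pos[1]
    distance = math.hypot(dx, dy)
    if distance < dead_zone:
        return None  # close enough: no directional cue needed

    # Normalise to a unit direction vector.
    ux, uy = dx / distance, dy / distance

    def alignment(name):
        # Dot product between the motor's direction and the target direction.
        mx, my = MOTOR_DIRECTIONS[name]
        return mx * ux + my * uy

    # The motor with the largest dot product points most nearly at the target.
    return max(MOTOR_DIRECTIONS, key=alignment)
```

Choosing the motor by largest dot product is one simple way to snap a continuous direction onto a discrete actuator layout; it also makes the loss of directional precision between actuators easy to see.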

Conception & System Design

The core idea of the system was not simply to add vibration, but to embed a tactile information layer into a realistic spatial use case. To evaluate whether feedforward vibrotactile guidance truly relieves the visual channel, a dual-task scenario was designed in which users had to monitor a dynamic environment while simultaneously managing spatial object interaction in VR.

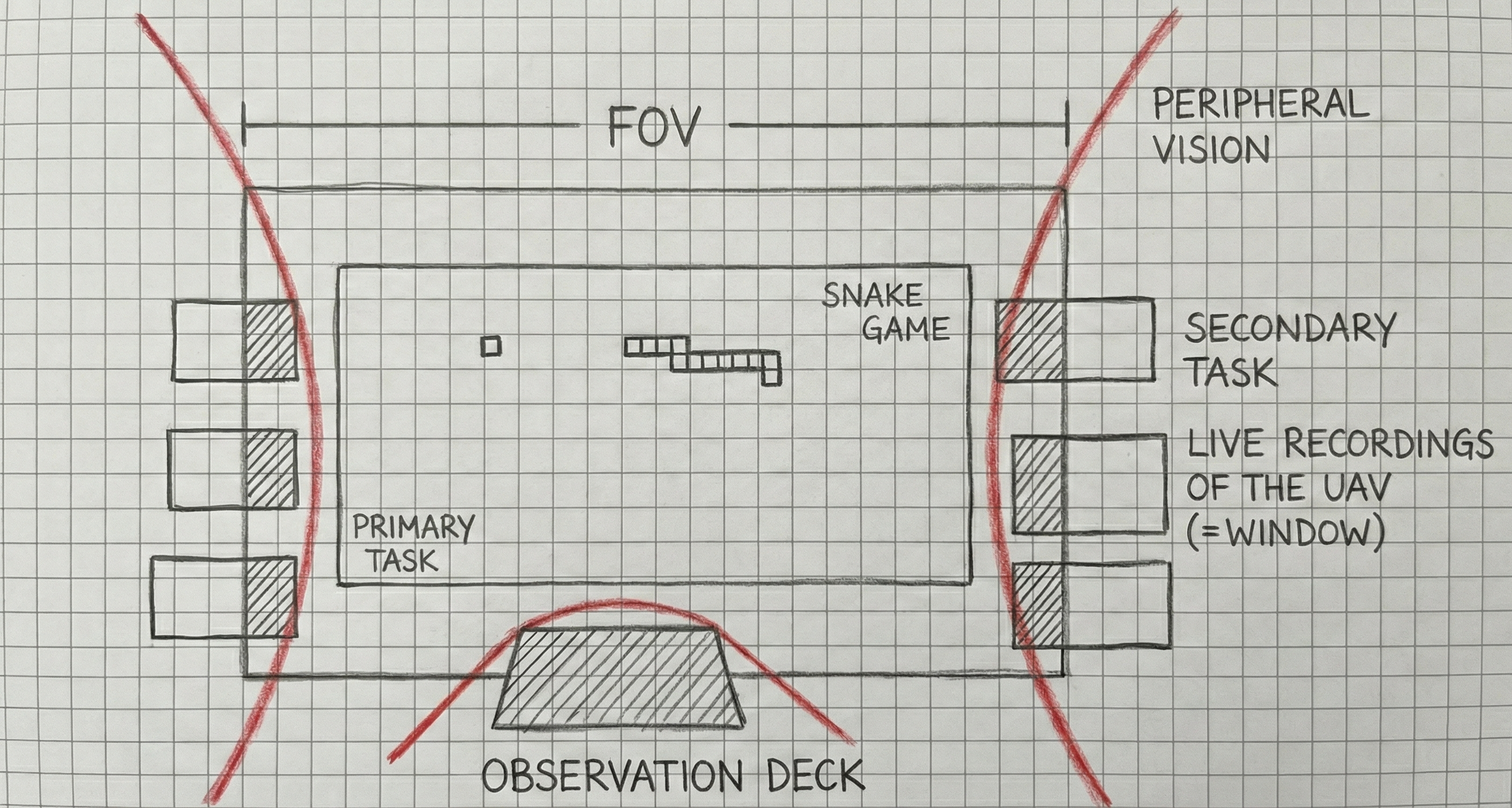

The use case simulated an observation scenario with UAV windows inside a 3D environment. The primary task required continuous visual monitoring and interaction with a simple snake game, thereby creating a controlled visual workload. The secondary task required participants to detect, select, and reposition endangered windows within a defined observation area in 3D space, making the tactile guidance functionally relevant.

Implementation of the VR Environment

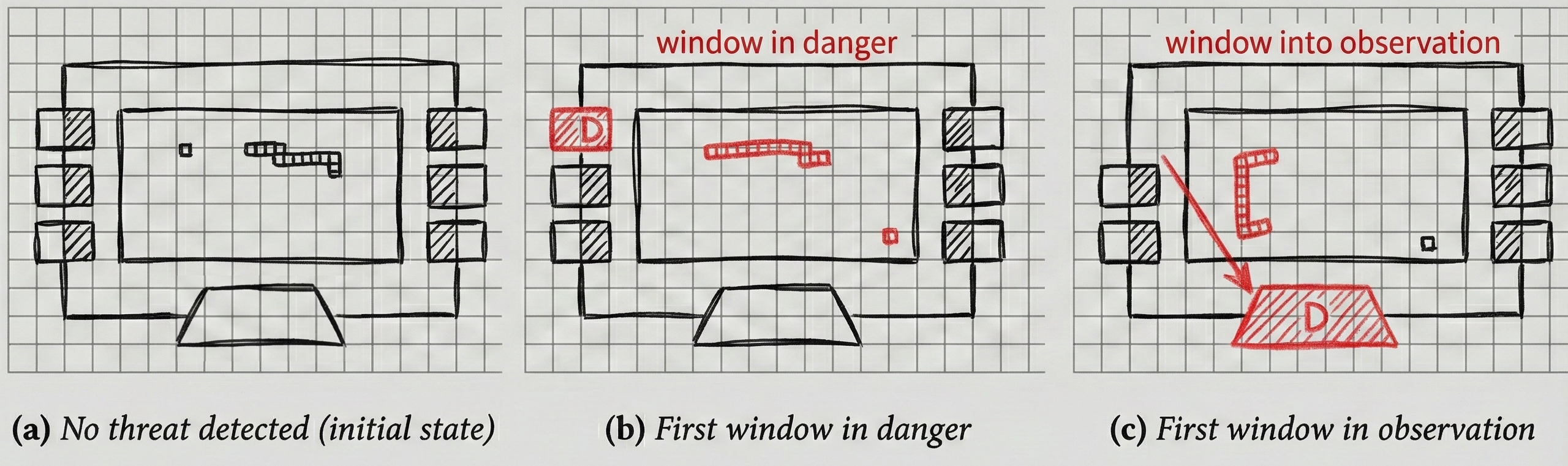

The virtual environment was implemented to reflect a structured observation space containing multiple UAV windows arranged within a defined area. When a window became endangered, it visually changed state and required immediate interaction.

As illustrated in Figures (a)-(c), the interaction sequence for endangered windows involved detection, selection, and precise positioning within the observation area.

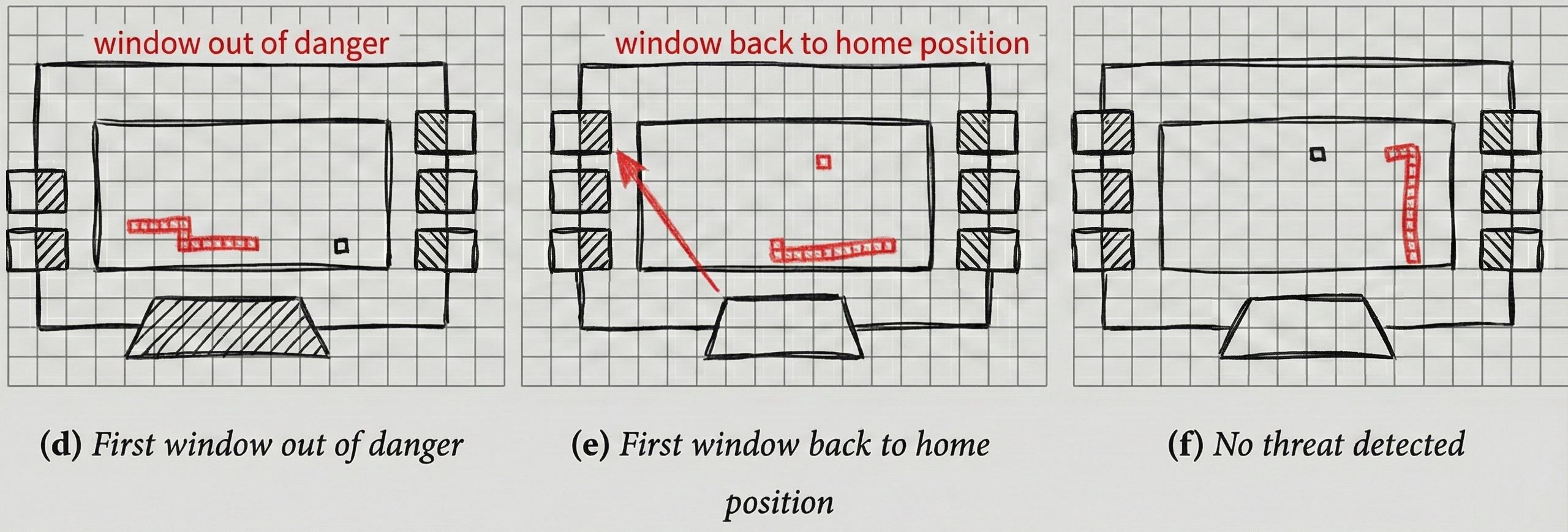

In contrast, Figures (d)-(f) illustrate the interaction sequence for windows that were no longer endangered. Participants had to detect the state change and return the window to its predefined home position.

Throughout both interaction flows, the vibrotactile system provided directional feedforward cues aligned with the target position, supporting spatial orientation without additional visual indicators.
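The two interaction flows can be read as a small state machine per window. The sketch below is one possible way to model it; the state names, event names, and the choice of where the cue points in each state are assumptions for illustration and are not taken from the thesis implementation.

```python
from enum import Enum, auto


class WindowState(Enum):
    HOME_SAFE = auto()        # at its home position, not endangered
    HOME_ENDANGERED = auto()  # endangered, still needs to be moved
    OBSERVED = auto()         # endangered and placed in the observation area
    OBSERVED_SAFE = auto()    # no longer endangered, needs to be returned home


# (current state, event) -> next state. Event names are hypothetical.
TRANSITIONS = {
    (WindowState.HOME_SAFE, "becomes_endangered"): WindowState.HOME_ENDANGERED,
    (WindowState.HOME_ENDANGERED, "placed_in_observation_area"): WindowState.OBSERVED,
    (WindowState.OBSERVED, "becomes_safe"): WindowState.OBSERVED_SAFE,
    (WindowState.OBSERVED_SAFE, "returned_home"): WindowState.HOME_SAFE,
}


def next_state(state, event):
    """Advance the window state; events not listed leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)


def guidance_target(state):
    """Return where a feedforward cue would point in this state, or None."""
    if state is WindowState.HOME_ENDANGERED:
        return "observation_area"
    if state is WindowState.OBSERVED_SAFE:
        return "home_position"
    return None  # no action required, so no cue
```

In this reading, a cue is only active in the two states that require the participant to act, which matches the description of the guidance as signalling when and where an action is needed.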

Methodology

Procedure


Measures

Primary Task Performance (Objective)

Score · wall hits

Secondary Task Performance (Objective)

Window placement duration · accuracy · number of misplaced & successfully placed windows

Subjective

Perceived workload · perceived stress · usability
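As a rough illustration of how the objective secondary-task measures could be derived from logged trials, consider the sketch below. The record fields, the condition labels, and the definition of placement duration (time from the window becoming endangered to its placement) are assumptions for the example. Accuracy is left out because its exact definition is not given here.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PlacementTrial:
    condition: str        # hypothetical label, e.g. "tactile" or "visual_only"
    endangered_at: float  # seconds, when the window changed state
    placed_at: float      # seconds, when the participant placed the window
    success: bool         # True if the window was placed correctly


def summarize(trials, condition):
    """Aggregate placement duration and placement counts for one condition."""
    selected = [t for t in trials if t.condition == condition]
    durations = [t.placed_at - t.endangered_at for t in selected]
    return {
        "mean_duration_s": mean(durations) if durations else None,
        "placed": sum(t.success for t in selected),
        "misplaced": sum(not t.success for t in selected),
    }
```

Calling `summarize` once per condition yields the per-condition values that a later statistical comparison would operate on.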

Key Findings

Performance in the primary Snake task remained stable across both conditions, indicating that the integration of vibrotactile guidance did not interfere with ongoing visual interaction.

In the secondary task, integrating feedforward vibrotactile directional guidance significantly increased the number of successfully placed windows.

Participants also reacted significantly faster to endangered UAV windows when tactile cues were provided.

Subjective measures revealed no significant differences in perceived workload or stress levels between conditions.

Interestingly, several participants reported that the condition with feedforward vibrotactile guidance felt more stressful because it created a sense of urgency to react immediately to window state changes. Although the interaction task itself was simplified, the presence of vibration made the situation feel more demanding, yet also more supportive, as it alerted participants to required actions.

Without vibrotactile cues, participants reported being afraid of occasionally overlooking endangered windows.

The prototype was perceived as intuitive by the majority of participants (N=14), and most stated that it did not distract from or restrict their movements (N=11). Participants described the system as supportive (N=15), particularly because it clearly indicated when an action was required. Additionally, many participants reported that feedforward vibrotactile guidance can effectively support spatial interaction in VR (N=10).

Participants also reported that vertical (up/down) cues were more difficult to identify than horizontal (left/right) guidance.

The latter was described as intuitive and easy to distinguish, suggesting anisotropic perceptual sensitivity across spatial axes.

Impact & Takeaways

This study demonstrates that feedforward vibrotactile directional guidance effectively supports spatial interaction in VR environments without impairing performance in parallel tasks. Performance in the primary Snake task remained stable, while reaction speed and successful window placements in the secondary UAV task significantly improved with tactile guidance, which underlines its value for spatial multitasking scenarios.

Participants perceived the prototype as intuitive and supportive, especially regarding left/right directional cues.

However, limitations also emerged. Vertical guidance was more difficult to discern, the impulses were occasionally too weak, and the discrete actuator layout reduced directional precision.

Overall, the results emphasize the potential of tactile feedforward systems as a complementary information channel in VR. They also highlight the importance of more refined actuator placement, stronger stimuli, and more continuous directional encoding in future iterations.
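To make "more continuous directional encoding" concrete, one conceivable option is to drive neighbouring actuators simultaneously with graded intensities instead of activating a single motor. The sketch below uses cosine weighting over a hypothetical ring of four actuators; both the layout and the weighting scheme are assumptions for illustration and were not evaluated in the study.

```python
import math


def actuator_intensities(target_angle_deg, actuator_angles_deg=(0, 90, 180, 270)):
    """Map a target direction to one intensity in [0, 1] per actuator.

    Each actuator's intensity is the cosine of its angular offset from the
    target direction, clamped at zero so that actuators facing away stay off.
    """
    intensities = []
    for actuator_angle in actuator_angles_deg:
        offset = math.radians(target_angle_deg - actuator_angle)
        intensities.append(max(0.0, math.cos(offset)))
    return intensities
```

With this weighting, a target straight ahead of one actuator drives only that actuator at full strength, while a target halfway between two actuators drives both at equal, reduced strength, so intermediate directions become distinguishable.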